
An RGB and Event Aligned Latent Manifold for Cross-Modal Perception

Vincenzo Polizzi¹, David B. Lindell², Jonathan Kelly¹

¹University of Toronto, Robotics Institute

²University of Toronto, Department of Computer Science

Abstract

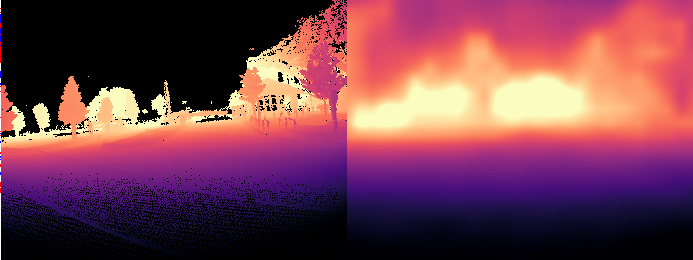

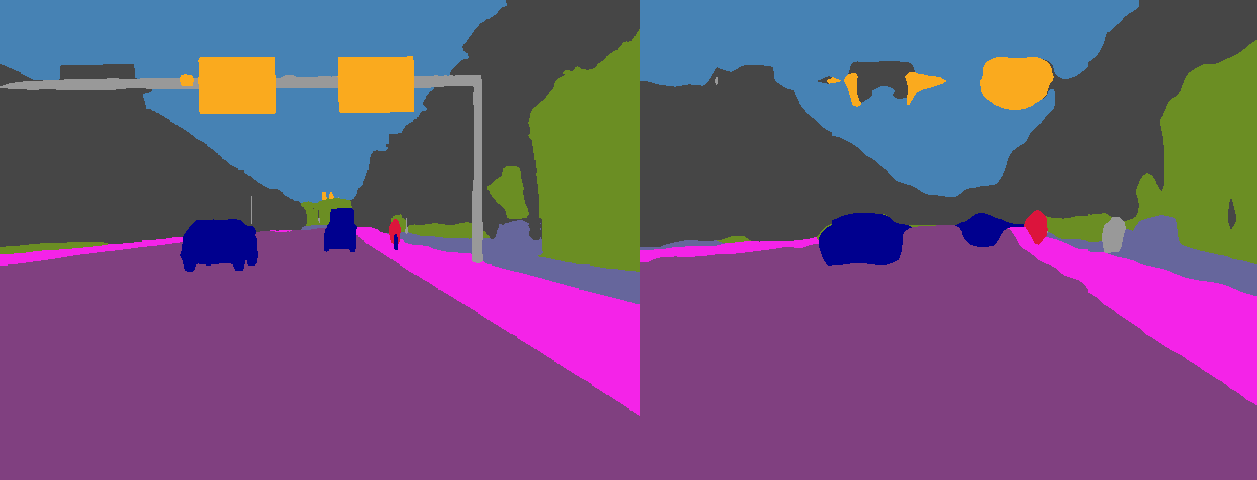

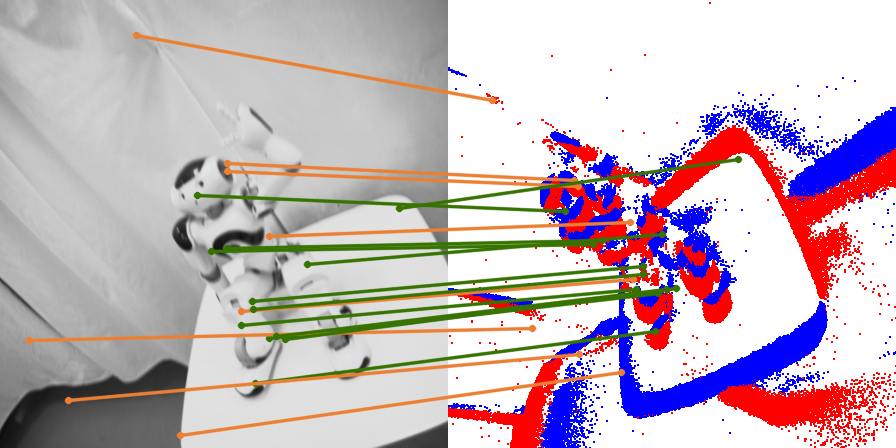

Event cameras offer several advantages over standard frame-based sensors, including high temporal resolution, low latency, and robustness to extreme lighting. However, existing learning-based approaches to event processing are typically confined to narrow, task-specific silos and do not generalize across modalities. We address this gap with REALM, a cross-modal framework that learns an RGB and Event Aligned Latent Manifold by projecting event representations into the pretrained latent space of RGB foundation models. Instead of task-specific training, we use low-rank adaptation (LoRA) to bridge the modality gap, unlocking the geometric and semantic priors of frozen RGB backbones for asynchronous event streams. We show that REALM maps events into the latent space of ViT-based foundation models. This allows us to perform downstream tasks such as depth estimation and semantic segmentation by simply transferring linear heads trained on the RGB teacher. Most significantly, REALM enables the direct, zero-shot application of complex, frozen image-trained decoders, such as MASt3R, to raw event data. We demonstrate state-of-the-art performance in wide-baseline feature matching, significantly outperforming specialized architectures.
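For readers unfamiliar with LoRA, the sketch below illustrates the general idea in plain Python: a frozen weight matrix W is left untouched, and only a low-rank update (alpha / r) * B * A is trained. This is the generic LoRA formulation, not the REALM implementation; the class name `LoRALinear`, the rank, the scaling, and the initialization are illustrative assumptions, and the source does not state which layers are adapted.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


class LoRALinear:
    """Hypothetical linear layer with a frozen weight and a trainable low-rank update."""

    def __init__(self, frozen_weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        out_dim, in_dim = len(frozen_weight), len(frozen_weight[0])
        self.weight = frozen_weight      # W: out_dim x in_dim, never updated
        self.scale = alpha / rank        # assumed scaling convention (alpha / r)
        # A (rank x in_dim) starts small and random; B (out_dim x rank) starts at zero,
        # so the adapted layer initially reproduces the frozen layer exactly.
        self.down = [[rng.gauss(0.0, 0.01) for _ in range(in_dim)] for _ in range(rank)]
        self.up = [[0.0] * rank for _ in range(out_dim)]

    def effective_weight(self):
        """Return W + scale * B @ A."""
        delta = matmul(self.up, self.down)
        return [[w + self.scale * d for w, d in zip(w_row, d_row)]
                for w_row, d_row in zip(self.weight, delta)]

    def forward(self, x):
        """Apply the adapted layer to a single input vector."""
        return [sum(w * v for w, v in zip(row, x)) for row in self.effective_weight()]

    def trainable_parameters(self):
        """Count only the low-rank factors; the frozen weight is excluded."""
        return sum(len(r) for r in self.down) + sum(len(r) for r in self.up)


layer = LoRALinear([[1.0, 2.0], [3.0, 4.0]], rank=1, alpha=2.0)
initial_output = layer.forward([1.0, 1.0])  # identical to the frozen layer's output
```

Because B starts at zero, training begins from the behavior of the pretrained backbone, and the number of trainable parameters grows with r * (in_dim + out_dim) rather than in_dim * out_dim.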

Interactive Demo

Try REALM on your own data. Upload an RGB image and an event voxel grid (see the GitHub repository for how to generate one).
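As a rough illustration of what an event voxel grid is, the sketch below uses a common formulation in which each event's polarity is split between its two nearest temporal bins. It is an assumption for illustration only: the function name `events_to_voxel_grid`, the `(x, y, t, polarity)` tuple layout, and the bin count are hypothetical, and the preprocessing in the REALM repository may differ.

```python
import math


def events_to_voxel_grid(events, num_bins, height, width):
    """Accumulate events into a num_bins x height x width grid.

    events: iterable of (x, y, t, polarity) with polarity in {-1, +1}.
    Timestamps are normalized to [0, num_bins - 1] and each event's polarity
    is linearly interpolated between the two neighboring temporal bins.
    """
    events = list(events)
    grid = [[[0.0] * width for _ in range(height)] for _ in range(num_bins)]
    if not events:
        return grid

    t_first = min(e[2] for e in events)
    t_last = max(e[2] for e in events)
    duration = (t_last - t_first) or 1.0  # avoid division by zero for a single timestamp

    for x, y, t, polarity in events:
        if not (0 <= x < width and 0 <= y < height):
            continue  # skip events outside the sensor area
        t_norm = (num_bins - 1) * (t - t_first) / duration
        lower = min(int(math.floor(t_norm)), num_bins - 1)
        frac = t_norm - lower
        grid[lower][y][x] += polarity * (1.0 - frac)
        if lower + 1 < num_bins:
            grid[lower + 1][y][x] += polarity * frac
    return grid


# Four synthetic events on a 2 x 2 sensor, binned into 3 temporal slices.
sample_events = [(0, 0, 0.0, 1), (1, 0, 0.5, -1), (0, 1, 0.25, 1), (1, 1, 1.0, 1)]
voxels = events_to_voxel_grid(sample_events, num_bins=3, height=2, width=2)
```

The resulting grid has one channel per temporal bin, so it can be passed to an image-style network in the same way as a multi-channel image.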

Qualitative Results on Downstream Tasks

Cite this work

@misc{polizzi_2026_realm,
  title={REALM: An RGB and Event Aligned Latent Manifold for Cross-Modal Perception},
  author={Vincenzo Polizzi and David B. Lindell and Jonathan Kelly},
  year={2026},
  eprint={},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/},
}